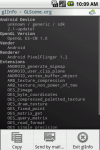

Hello WebGL source code

With SmartMS v1.0.1 out, you can now use WebGL!

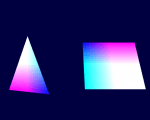

Download HelloWebGL.zip (51 kB). It contains the first demo (in .opp form and pre-compiled), as well as the initial WebGLScene units, which you’ll have to copy to your “Libraries” folder (the WebGL import units should already have been delivered with v1.0.1).

- GLS.Vectors: contains vector manipulation types as well as a collection of helpers to operate on them.

- GLS.Base: contains Pascal classes to simplify basic OpenGL tasks revolving around buffers and shaders.
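To give a rough idea of what "vector types plus helpers" means in practice, here is a minimal sketch in TypeScript. It is not the GLS.Vectors source: the `Vec3` type and every `vec…` function name below are hypothetical and only illustrate the kind of operations a WebGL demo typically needs.

```typescript
// Hypothetical 3-component vector type; NOT the actual GLS.Vectors declarations.
type Vec3 = [number, number, number];

// Component-wise sum of two vectors.
function vecAdd(a: Vec3, b: Vec3): Vec3 {
  return [a[0] + b[0], a[1] + b[1], a[2] + b[2]];
}

// Multiply every component by a scalar factor.
function vecScale(v: Vec3, f: number): Vec3 {
  return [v[0] * f, v[1] * f, v[2] * f];
}

// Dot product: zero when the vectors are perpendicular.
function vecDot(a: Vec3, b: Vec3): number {
  return a[0] * b[0] + a[1] * b[1] + a[2] * b[2];
}

// Cross product: a vector perpendicular to both inputs (handy for surface normals).
function vecCross(a: Vec3, b: Vec3): Vec3 {
  return [
    a[1] * b[2] - a[2] * b[1],
    a[2] * b[0] - a[0] * b[2],
    a[0] * b[1] - a[1] * b[0],
  ];
}

// Euclidean length of a vector.
function vecLength(v: Vec3): number {
  return Math.sqrt(vecDot(v, v));
}

// Unit-length copy of a vector; a zero vector is returned unchanged
// to avoid dividing by zero.
function vecNormalize(v: Vec3): Vec3 {
  const len = vecLength(v);
  return len === 0 ? v : vecScale(v, 1 / len);
}

// Usage: compute the normal of a triangle lying in the XY plane.
const edge1: Vec3 = [1, 0, 0];
const edge2: Vec3 = [0, 1, 0];
const normal = vecNormalize(vecCross(edge1, edge2)); // [0, 0, 1]
```

Helpers like these keep the per-vertex math out of the rendering code, which is presumably the role the units above play on the Pascal side.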